
Learn More: Crawling web pages with WebSPHINX.Provide a graphical user interface that lets you configure and control a customizable web crawler.WebSPHINX (Website-Specific Processors for HTML INformation eXtraction) is an excellent tool as a Java class library and interactive development environment for web crawlers. WebSPHINX comprises two main parts: the Crawler Workbench and the WebSPHINX class library. Learn More: Getting Started with Norconex HTTP Collector.Highly scalable – Can crawl millions on a single server of average capacity.It can crawl millions of pages on a single server of median capacity. You can use it as you wish- as a full-featured collector or embed it in your own application. Moreover, it works well on any operating system. Norconex is a great tool because it enables you to crawl any kind of web content that you need. If you are looking for open source web crawlers related to enterprise needs, Norconex is what you need. Learn More: Jaunt Web Scraping Tutorial – Quickstart.Customizable caching & content handlers.The library provides a fast, ultra-light headless browser.When it comes to a browser, it does provide web scraping functionality, access to DOM, and control over each HTTP Request/Response but does not support JavaScript. Since Jaunt is a commercial library, it offers diverse kinds of versions, paid as well as free for a monthly download. Jaunt is a unique Java library that helps you in processes pertaining to web scraping, web automation and JSON querying. Learn More: Jsoup HTML parser – Tutorial & examples.Provides a slick API to traverse the HTML DOM tree to get the elements of interest.Developers love it because offers quite a convenient API for extracting and manipulating data, making use of the best of DOM, CSS and jquery-like methods. Jsoup is great as a Java library which helps you navigate the real-world HTML. 
Learn More: Getting Started with StormCrawler.However, you can also use it for large scale recursive crawls particularly where low latency is needed. StormCrawler stands out as it serves a library and collection of resources that developers can use for building their own crawlers. StormCrawler is also preferred by many for use cases in which the URL to fetch and parse come as streams.

Learn More: Apache Nutch – Step by Step.Robust and scalable – Nutch can run on a cluster of up to 100 machines.

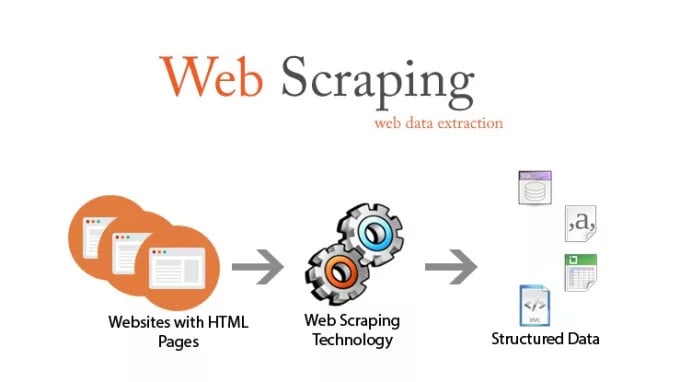

Features like politeness, which obeys robots.txt rules.Highly scalable and relatively feature rich crawler.Moreover, it is also possible to use pluggable indexing for Apache Solr, Elastic Search etc. It’s great to use because it offers varied extensible interfaces such as Parse, Index and Scoring Filter’s custom implementations such as Apache Tika for parsing. Here’s a list of best java web scraping/crawling libraries which can help you to crawl and scrape the data you want from the Internet.Īpache Nutch is one of the most efficient and popular open source web crawler software projects. As businesses rely heavily on data for decision making, web scraping has, in turn, grown in significance. However, as data needs to be collated from different sources, it is even more important to leverage web scraping as it can make this entire exercise quite easy and hassle-free.Īs information is scattered all over the digital space in the form of news, social media posts, images on Instagram, articles, e-commerce sites etc., web scraping is the most efficient way to keep an eye on the big picture and derive business insights that can propel your enterprise. In this context, java web scraping/crawling libraries can come in quite handy. In other words, you can extract large quantities of data from disparate sources.ĭata has always been important but of late, businesses have begun to use data in order to make business decisions. As it is automated, there’s no upper limit to how much data you can extract.

In any case, web scraping and crawling enables this process of fetching the data in an easy and automated fashion. At times, it is not possible for technical reasons. Since data is growing at a fast clip on the web, it is not possible to manually copy and paste it. It requires downloading and parsing the HTML code in order to scrape the data that you require. The data does not necessarily have to be in the form of text, it could be images, tables, audio or video.

Web scraping or crawling is the process of extracting data from any website.
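To make the download-and-parse step behind web scraping concrete, here is a minimal sketch in plain Java with no third-party dependencies. It is not the API of any library named in this article: the class `LinkExtractor`, its methods `fetch` and `extractLinks`, and the example URLs are all hypothetical names chosen for illustration. It uses a regular expression to find links, which is a deliberate simplification; a dedicated HTML parser such as Jsoup copes with messy real-world markup far better.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper for illustration only; requires Java 11 or later.
class LinkExtractor {

    // Matches href="..." or href='...' inside an <a> tag.
    // Assumption: quoted attribute values only; unquoted hrefs are ignored.
    private static final Pattern HREF = Pattern.compile(
            "<a\\b[^>]*?\\bhref\\s*=\\s*([\"'])(.*?)\\1",
            Pattern.CASE_INSENSITIVE | Pattern.DOTALL);

    // Downloads the page body as a string using the JDK's built-in HTTP client.
    static String fetch(String url) throws IOException, InterruptedException {
        HttpClient client = HttpClient.newHttpClient();
        HttpRequest request = HttpRequest.newBuilder(URI.create(url)).GET().build();
        return client.send(request, HttpResponse.BodyHandlers.ofString()).body();
    }

    // Extracts link targets from the HTML and resolves them against the page URL.
    static List<String> extractLinks(String html, String baseUrl) {
        URI base = URI.create(baseUrl);
        List<String> links = new ArrayList<>();
        Matcher m = HREF.matcher(html);
        while (m.find()) {
            String href = m.group(2).trim();
            if (href.isEmpty() || href.startsWith("#")) {
                continue; // skip empty values and in-page anchors
            }
            try {
                links.add(base.resolve(href).toString());
            } catch (IllegalArgumentException e) {
                // skip values that are not valid URIs
            }
        }
        return links;
    }

    public static void main(String[] args) {
        // Inline HTML keeps the demo runnable offline; pass the result of
        // fetch(url) instead to work on a live page.
        String html = "<p><a href=\"/docs\">Docs</a> "
                + "<a href='https://example.org/x'>X</a></p>";
        for (String link : extractLinks(html, "https://example.com/index.html")) {
            System.out.println(link);
        }
    }
}
```

A crawler repeats these two steps: it fetches a page, extracts its links, and queues the ones it has not yet visited. The libraries in this list add what the sketch leaves out, such as robots.txt politeness, robust parsing and scaling across machines.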
